We integrated our project management SaaS with OpenAI’s ChatGPT and needed a test case. What’s easier than making a peanut butter sandwich? The goal is so simple that most humans, regardless of age or disability, can safely complete the basic steps.

During the beta release of our SaaS, we received feedback that our solution was incomplete without risk management. So naturally, common sense would dictate returning to our peanut butter sandwich test. The results were surprising and we learned a lot.

HUMAN VS MACHINE

The age-old competition. In one corner, we have a PMP-certified project manager, i.e. a human. In the other corner, we have ChatGPT, a billion-dollar artificial intelligence engine. They both analyzed the risk involved in making a peanut butter sandwich and came up with different answers.

We are excited to bring you this new iteration of the famous man vs. machine battle. I’ll take you into the mind of the human and through the documented results from our FolioProjects SaaS. So, without further delay, let’s look at the high-level summary of the results:

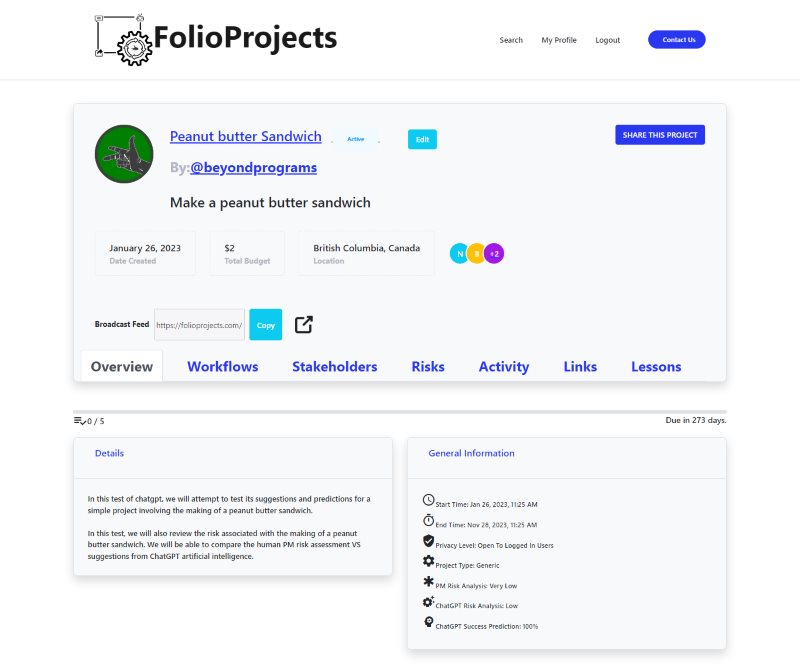

As you can see, the PM rated the project as very low risk, as I assume most of our readers would. However, although this option was available to ChatGPT, it selected “low risk”. This is where you raise your eyebrow in curiosity and dismay. Of course, the machine must be confused by the random stuff it finds online. This, we thought, was an example of a situation where you should not trust AI over a human. Is that really the case though?

Luckily, we also capture a detailed assessment from ChatGPT regarding what it thinks the risks involved are. However, it’s only fair for us to set the scene, so you understand how we interface with ChatGPT.

FolioProjects & ChatGPT INTEGRATION

FolioProjects requires a basic project to be created with a few details including workflow items before engaging ChatGPT. This forms the basis of context, so the AI engine stays focused during our engagement.

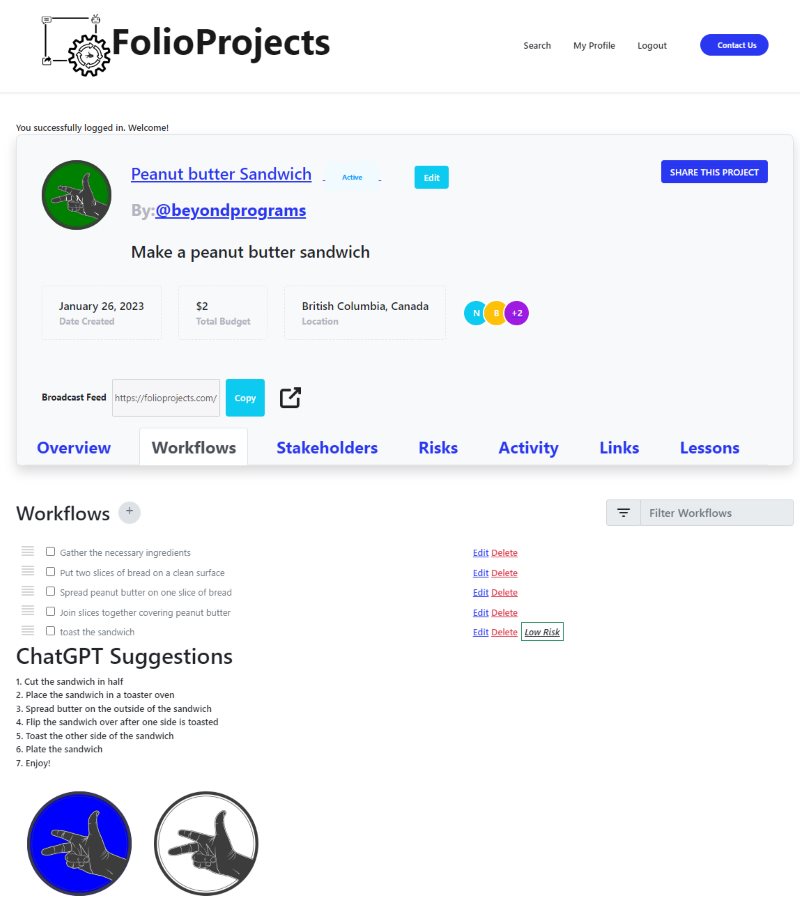

The AI engine makes suggestions of workflow items that will improve the likelihood of success of the project. The human adds these suggestions to the project if they make sense.

ChatGPT also has enough information to assess the risk of the project and provide some details. Again, the PM can review the suggestions and make adjustments.

The ChatGPT integration provides the following details:

- Likelihood of success

- Risk level

- Risk Assessment
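As a rough illustration of how these three details could be held side by side with the PM’s own rating, here is a minimal Python sketch. It is not FolioProjects code: the names `RiskLevel`, `AiReview`, and `rating_gap` are hypothetical, only the “very low” and “low” levels come from this article (the other levels and the likelihood value are assumed placeholders), and the risk items listed are just the ones mentioned later in this post.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class RiskLevel(IntEnum):
    # Only "very low" and "low" appear in the article; MEDIUM and HIGH are assumed.
    VERY_LOW = 1
    LOW = 2
    MEDIUM = 3
    HIGH = 4


@dataclass
class AiReview:
    """Hypothetical container for the three details returned by the integration."""
    likelihood_of_success: str
    risk_level: RiskLevel
    risk_assessment: list[str] = field(default_factory=list)


def rating_gap(pm_level: RiskLevel, ai: AiReview) -> int:
    """Positive when the AI rates the project riskier than the PM does."""
    return int(ai.risk_level) - int(pm_level)


review = AiReview(
    likelihood_of_success="high",  # placeholder value, not taken from the article
    risk_level=RiskLevel.LOW,
    risk_assessment=[
        "burns from toasting",
        "cross-contamination from the surface",
        "choking",
    ],
)

gap = rating_gap(RiskLevel.VERY_LOW, review)
print(f"AI rated the project {gap} level(s) above the PM")
```

Keeping the levels ordered makes a disagreement between human and machine easy to spot and to discuss.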

Note that even with the opportunity to make changes, the human’s risk assessment remains different from that of the artificial intelligence. Is this hubris, ignorance, pride, or something else?

MIND OF THE HUMAN

During the beta test, we received the ChatGPT workflow suggestion to toast the peanut butter sandwich. This ChatGPT suggestion made sense, so we added it to the workflow.

I thought that, theoretically, toasting carried some risk of fire. However, the risk seemed so negligible that I did not add it. Instead, I let ChatGPT assess the risk first.

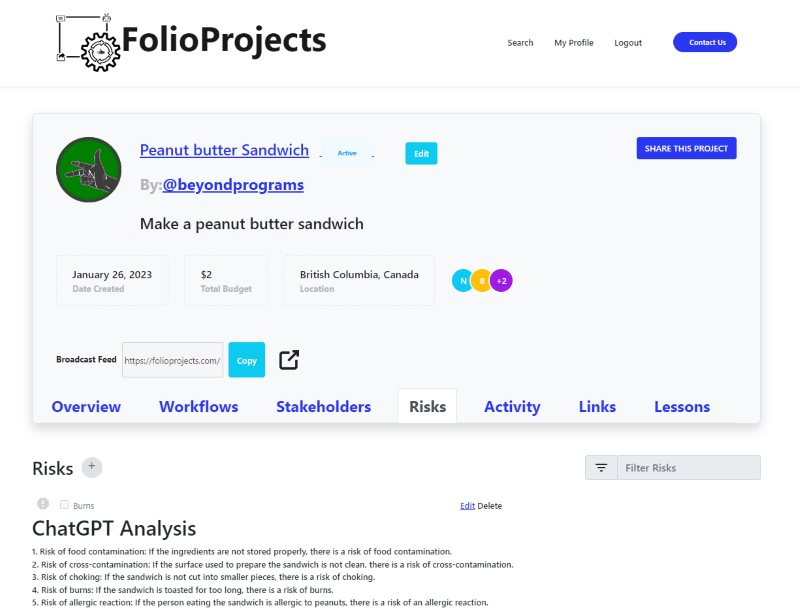

After reviewing the detailed assessment from ChatGPT, I noticed that it included burns as its #4 point. I thought that I should accept this assessment from ChatGPT, and added it to the risk registry for the project, targeting the toasting workflow item in the risk.

I figured that since ChatGPT listed the item in the #4 spot, and provided the summary decision of low risk, that ChatGPT also didn’t consider the suggested items as big concerns.

I initially chose to ignore the other risk items. In my mind, the machine was right to suggest them, but I didn’t think they reached the threshold of importance to stakeholders. For example, ChatGPT flagged cross-contamination from the surface as an issue; however, the workflow clearly dictates that the surface should be clean.

However, while writing this article, I had to accept that all the risk suggestions should be added to the project. I accepted the “burns” suggestion because I already had it in my mind as an option. The others I refused to accept because of my own biases.

AI RISK ASSESSMENT

The reality is that ChatGPT didn’t have any of my built-in biases. I thought to myself, isn’t choking a risk every time a human eats food? I have never seen it listed on a single food package. However, the failure of others cannot be an excuse.

The reality is that at the very least, the team creating the product should know the risk exists. This would allow them to potentially make alterations like cutting the sandwich into smaller pieces. You may scream blasphemy, but the risk should be logged.

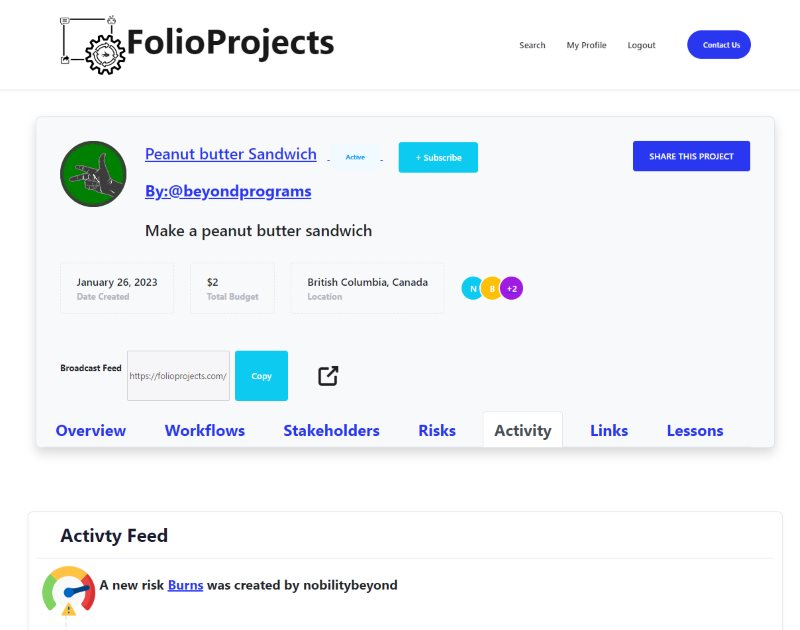

FolioProjects documents everything. It captures the fact that AI was engaged and that the PM then made a change to the risk registry. Others in the project can review that decision and discuss it with the PM.
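The idea of logging both the AI engagement and the PM’s follow-up change can be sketched in a few lines of Python. This is an assumption-laden illustration, not how FolioProjects is implemented: the `Project`, `Risk`, `record_ai_engaged`, and `accept_risk` names, the log fields, and the workflow item label are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Risk:
    description: str
    workflow_item: str  # the workflow item the risk targets
    source: str         # who first raised it, e.g. "ai" or "pm"


@dataclass
class Project:
    name: str
    risk_register: list[Risk] = field(default_factory=list)
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, actor: str, action: str, detail) -> None:
        # Every event is appended, never overwritten, so others can review it later.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
        })

    def record_ai_engaged(self, suggestions: list[str]) -> None:
        """Record that the AI was asked for a risk assessment and what it returned."""
        self._log("ai", "risk_assessment", list(suggestions))

    def accept_risk(self, pm: str, description: str, workflow_item: str) -> None:
        """The PM accepts an AI suggestion into the risk register."""
        self.risk_register.append(Risk(description, workflow_item, source="ai"))
        self._log(pm, "risk_added", description)


project = Project("Peanut butter sandwich")
project.record_ai_engaged(["burns", "cross-contamination", "choking"])
project.accept_risk("pm", "burns", "Toast the sandwich")  # hypothetical item name

for entry in project.audit_log:
    print(entry["actor"], entry["action"], entry["detail"])
```

Because the log keeps the full list of AI suggestions, a reviewer can see which ones the PM accepted and which ones were left out.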

As a group, we may decide that choking isn’t important in a particular case. However, if the sandwich is being given to a group of elderly people, maybe another approach is critical. The truth is that we have failed to provide enough details about the project, so the risk item should be logged and communicated with stakeholders.

So in reality, five valid risk suggestions cannot equate to “very low” risk. Some risks need to be mitigated or at least acknowledged.

CONCLUSION

We anticipate that the results were as much of a surprise for you as they were for us. If you thought of all the risks ahead of time, you are better than most.

Considering risks that are foreign to your situation is very difficult. That ability is precisely the value of experience and maturity. However, artificial intelligence has narrowed that gap.

So in the never-ending battle of machine vs. human, in this case, it seems the machine beat our Project Management Institute PMP-certified team at Beyond Programs Ltd. Or do you feel differently?